Week 06: Review

2026-02-02

Multiple-Choice Questions

Question 1: Hash Functions

Which of the following properties should a good hash function NOT possess?

I. Uniformity: It should distribute the keys uniformly across the hash table.

II. Randomness: It should map the same key to a different location in the hash table each time it is computed.

III. Independence: It should generate the hash value of each key independently of any other key.

IV. Efficiency: It should require minimal time and resources to compute the hash value.

Answer 1: Hash Functions

The correct answer is II. A good hash function should deterministically map the same key to the same location in the hash table to ensure that data can be reliably retrieved.

The other properties (I, III, and IV) are desirable characteristics of a good hash function.
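The determinism requirement can be illustrated with a minimal sketch. The helper name `slot_for` is hypothetical, and the use of `std::hash` is an assumption made for illustration only; the course may use a different hash function.

```cpp
#include <cstddef>
#include <functional>
#include <string>

// Hypothetical helper: maps a key to a slot index in a table with m slots.
// std::hash returns the same value for equal keys within one program run,
// so the slot computed at insertion is found again at lookup.
std::size_t slot_for(const std::string& key, std::size_t m) {
    return std::hash<std::string>{}(key) % m;
}
```

Calling `slot_for("apple", 11)` twice yields the same index both times, which is exactly what a "random" mapping in the sense of option II would break.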

Question 2: Open-Address Hash Tables Using Linear Probing

How many distinct probe sequences are used in open-address hash tables with linear probing, assuming that the hash table has \(m\) slots?

I. \(\lfloor m / 2 \rfloor\)

II. \(m\)

III. \(m^2\)

IV. \(m!\)

Answer 2: Open-Address Hash Tables Using Linear Probing

The correct answer is II. In open-address hash tables with linear probing, there are \(m\) distinct probe sequences, one for each slot in the hash table. Each probe sequence starts at a different initial hash index and continues linearly through the table until an empty slot is found or the key is located.

Linear probing therefore uses only \(m\) of the \(m!\) possible probe sequences (permutations of the slots).
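The following sketch generates the probe sequence that belongs to a given initial hash index. The function name `linear_probe_sequence` is an illustrative assumption, not part of the course material.

```cpp
#include <cstddef>
#include <vector>

// Probe sequence of linear probing for initial hash index `start` in a
// table with m slots: start, start + 1, ..., wrapping around modulo m.
std::vector<std::size_t> linear_probe_sequence(std::size_t start, std::size_t m) {
    std::vector<std::size_t> seq;
    seq.reserve(m);
    for (std::size_t i = 0; i < m; ++i) {
        seq.push_back((start + i) % m);
    }
    return seq;
}
```

For \(m = 4\) and initial index 2, the sequence is 2, 3, 0, 1. Because the sequence is fully determined by the initial index, there can be at most \(m\) different sequences.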

Question 3: Hash Table with Chaining

What is the average running time of an insertion into a hash table if chaining is used, assuming that the load factor \(\alpha\) is \(\Theta(1)\)? In the expressions below, \(n\) stands for the number of keys already in the hash table.

I. \(\Theta(1)\)

II. \(\Theta(\log n)\)

III. \(\Theta(n)\)

IV. \(\Theta(n \log n)\)

Answer 3: Hash Table with Chaining

The correct answer is I because, on average, each bucket in the hash table contains \(\alpha = \Theta(1)\) keys. Although all keys in a bucket may have to be checked during insertion, the asymptotically constant average bucket size allows for \(\Theta(1)\) insertion.

Generally, the average-case time complexity for insertion in a hash table with chaining is \(\Theta(1 + \alpha)\), which simplifies to \(\Theta(1)\) when \(\alpha = \Theta(1)\).
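A minimal sketch of insertion with chaining shows where the \(\Theta(1 + \alpha)\) cost comes from. The class `ChainedHashTable`, the division-method hash `key % m`, and the restriction to unsigned integer keys are assumptions made for illustration.

```cpp
#include <cstddef>
#include <list>
#include <vector>

// Minimal chained hash table for unsigned integer keys (illustrative only).
// The number of buckets m must be greater than zero.
class ChainedHashTable {
public:
    explicit ChainedHashTable(std::size_t m) : buckets_(m) {}

    // Returns true if the key was inserted, false if it was already present.
    // Cost: O(1) to locate the bucket plus a scan of one chain, whose
    // expected length is alpha = n / m under simple uniform hashing.
    bool insert(unsigned key) {
        std::list<unsigned>& chain = buckets_[key % buckets_.size()];
        for (unsigned existing : chain) {
            if (existing == key) return false;
        }
        chain.push_front(key);
        ++size_;
        return true;
    }

    // Load factor alpha = n / m.
    double load_factor() const {
        return static_cast<double>(size_) / buckets_.size();
    }

private:
    std::vector<std::list<unsigned>> buckets_;
    std::size_t size_ = 0;
};
```

The duplicate check is what makes the chain scan necessary. If duplicates did not need to be detected, the new key could be pushed to the front of the chain in constant time regardless of \(\alpha\).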

Question 4: Searching a Red-Black Tree

What is the worst-case running time of a search for a node in a red-black tree containing \(n\) nodes?

I. \(\Theta(1)\)

II. \(\Theta(\log n)\)

III. \(\Theta(n)\)

IV. \(\Theta(n \log n)\)

Answer 4: Searching a Red-Black Tree

The correct answer is II. In any binary search tree, the worst-case running time of a search for a node is \(\Theta(h)\), where \(h\) is the height of the tree. Because the height of a red-black tree is \(\Theta(\log n)\), the worst-case running time is \(\Theta(\log n)\) for this self-balancing tree type.
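The \(\Theta(h)\) bound can be read off a sketch of the search loop. The `Node` type and the function name `tree_search` are illustrative assumptions.

```cpp
// Minimal node type. A red-black tree node would also store a color,
// which the search never inspects.
struct Node {
    int key;
    Node* left;
    Node* right;
    explicit Node(int k, Node* l = nullptr, Node* r = nullptr)
        : key(k), left(l), right(r) {}
};

// Iterative search: each loop iteration moves one level down,
// so at most h + 1 nodes are visited in a tree of height h.
// Returns nullptr if the key is not present.
const Node* tree_search(const Node* x, int key) {
    while (x != nullptr && x->key != key) {
        x = (key < x->key) ? x->left : x->right;
    }
    return x;
}
```

The search is identical for plain binary search trees and red-black trees. Only the guarantee on \(h\) differs.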

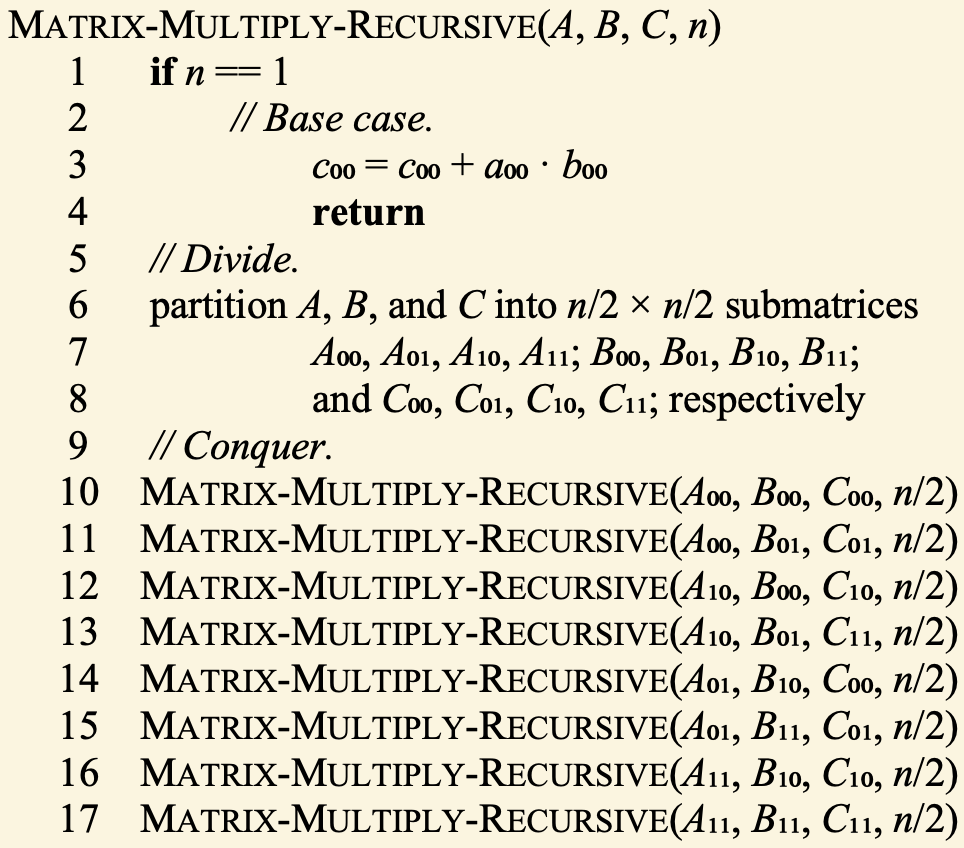

Question 5: Recursive Matrix Multiplication

Consider the Matrix-Multiply-Recursive(\(A\), \(B\), \(C\), \(n\)) procedure defined on the right. Which recurrence relation correctly describes its running time \(T(n)\)?

I. \(T(n) = 8T(n/2) + \Theta(n)\)

II. \(T(n) = 8T(n/2) + \Theta(n^2)\)

III. \(T(n) = 4T(n/2) + \Theta(n)\)

IV. \(T(n) = 4T(n/2) + \Theta(n^2)\)

Answer 5: Recursive Matrix Multiplication

The correct answer is II. Matrix-Multiply-Recursive divides each of the two input matrices into submatrices of size \(n/2 \times n/2\) and makes eight calls to itself. The additional work done outside the recursive calls, which involves adding the resulting submatrices together, takes \(\Theta(n^2)\) time.

According to the Master Theorem, the running time of the recurrence relation \(T(n) = 8T(n/2) + \Theta(n^2)\) is \(\Theta(n^3)\).
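The intermediate steps can be sketched as follows, writing the recurrence as \(T(n) = aT(n/b) + f(n)\) with \(p = \log_b a\). The case numbering is an assumption and follows the common three-case statement of the theorem.

\[ a = 8, \quad b = 2, \quad p = \log_2 8 = 3, \quad f(n) = \Theta(n^2) = O(n^{p - \epsilon}) \text{ with } \epsilon = 1 \quad \Longrightarrow \quad T(n) = \Theta(n^p) = \Theta(n^3). \]

Because \(f(n)\) grows polynomially more slowly than \(n^p\), case 1 applies and the cost of the leaves dominates.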

Heap Heights

Exercise: Heap Heights

Consider the max-heap depicted below:

- What is the height of the node with value 74 in this max-heap?

- What is the height of this max-heap?

Solution: Heap Heights

By definition, the height of a node in a tree is the number of edges on the longest downward path from the node to a leaf. Because 74 is a leaf, its height is 0.

The height of a heap is the height of the root node. In this example, the height is 3.
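For a heap stored in an array, both heights can be computed without drawing the tree. The sketch below assumes 0-based indexing and the usual layout in which the children of index \(i\) are at \(2i + 1\) and \(2i + 2\); the function names are illustrative.

```cpp
#include <cstddef>

// Height of the node stored at 0-based index i (with i < n) in an
// array-based binary heap with n elements. The loop follows left children
// only: because the last level of a heap is filled from left to right,
// that path always ends at a deepest leaf of the subtree rooted at i.
std::size_t node_height(std::size_t i, std::size_t n) {
    std::size_t height = 0;
    while (2 * i + 1 < n) {  // a left child exists
        i = 2 * i + 1;
        ++height;
    }
    return height;
}

// Height of the whole heap, defined as the height of the root (index 0).
std::size_t heap_height(std::size_t n) {
    return node_height(0, n);
}
```

Any heap with 8 to 15 nodes has height 3, and every leaf has height 0, which is consistent with the solution above.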

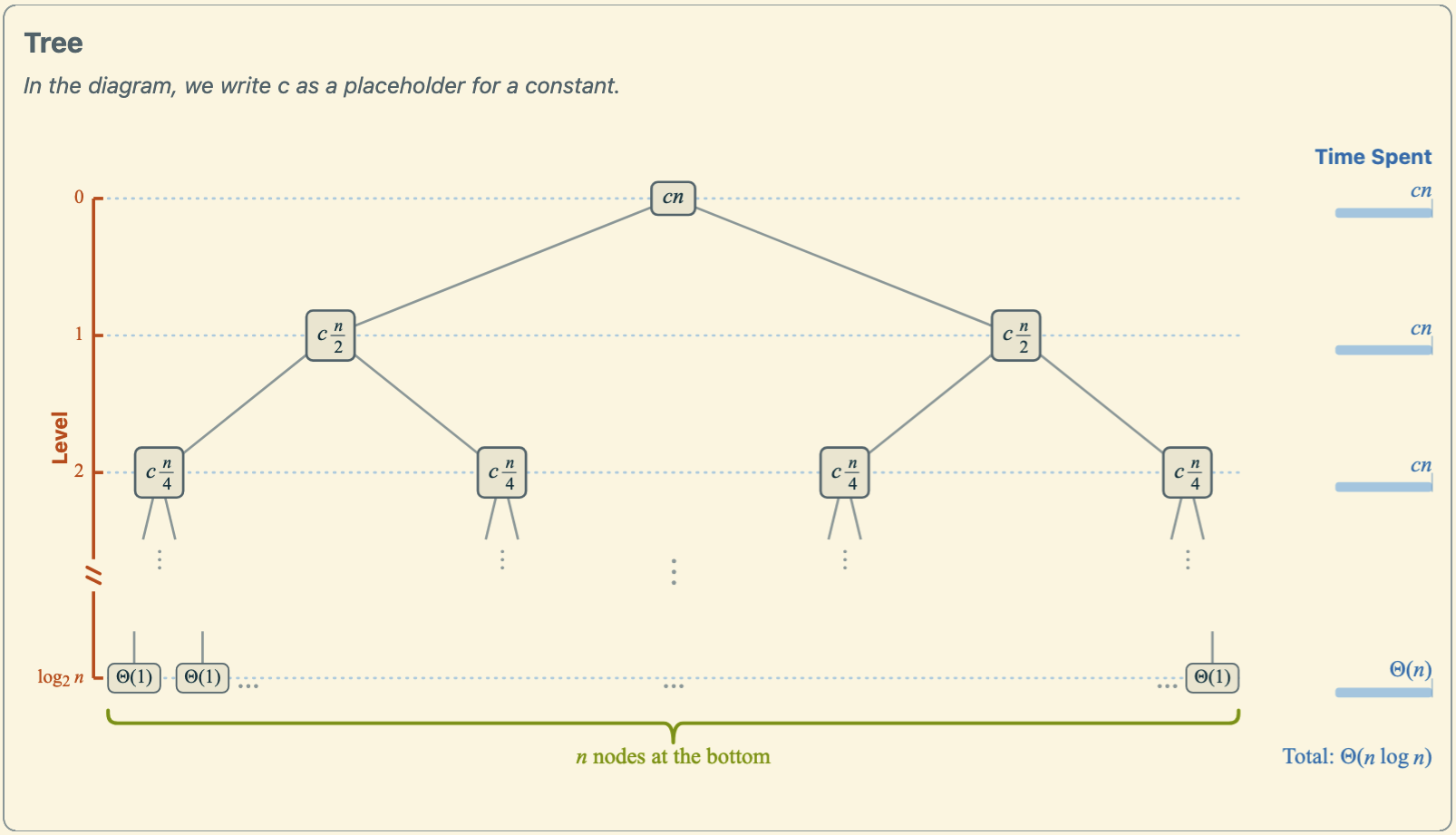

Recursion Trees

Exercise: Recursion Tree for Merge Sort

The running time of merge sort, applied to an array containing \(n\) numbers, follows the recurrence relation

\[\begin{equation*} T(n) = \begin{cases} \Theta(1) & \text{if } n=1, \\ 2T(n/2) + \Theta(n) & \text{if } n>1. \end{cases} \end{equation*}\]

a. Sketch the recursion tree. Clearly indicate the total cost at each level, the number of levels in the recursion, and the number of leaves at the bottom of the tree.

b. Derive the total running time of the algorithm in Θ-notation using either the recursion-tree method or the Master Theorem. Show your working.

Solution, Part a: Recursion Tree for Merge Sort

Solution, Part b: Derivation of Merge Sort’s Running Time

Assuming \(n\) is a power of 2, the recursion tree has \(\log_2 n + 1\) levels because \(n\) can be halved \(\log_2 n\) times before it reaches 1. Each of the top \(\log_2 n\) levels has a total cost of \(\Theta(n)\). The bottom level consists of \(n\) leaves with a cost of \(\Theta(1)\) each, so its total cost is also \(\Theta(n)\). Summing the costs across all levels gives \(T(n) = \Theta(n) \cdot (\log_2 n + 1) = \Theta(n \log n)\).

Alternatively, we can derive the same result from the Master Theorem. Because \(a = 2\) and \(b = 2\), we have \(p = \log_b a = 1\). Furthermore, \(f(n) = \Theta(n) = \Theta(n^p)\). Therefore, by case 2 of the Master Theorem, we conclude that \(T(n) = \Theta(n \log n)\).
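As a numerical sanity check, the recurrence can be evaluated directly. The sketch below assumes that \(n\) is a power of 2 and sets the constants hidden by the Θ-notation to 1, so it computes \(T(1) = 1\) and \(T(n) = 2T(n/2) + n\). The function name `merge_sort_cost` is illustrative.

```cpp
#include <cstdint>

// Evaluates T(1) = 1 and T(n) = 2 T(n/2) + n for n a power of two.
// Setting the hidden constants to 1 is an assumption for illustration.
std::uint64_t merge_sort_cost(std::uint64_t n) {
    if (n <= 1) return 1;
    return 2 * merge_sort_cost(n / 2) + n;
}
```

Under these assumptions, the result equals \(n(\log_2 n + 1)\) exactly. For example, `merge_sort_cost(8)` returns \(8 \cdot 4 = 32\), matching a cost of \(n\) on each of the \(\log_2 n + 1\) levels.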

Running Times

Exercise: Running Times of C++ Functions

What is the worst-case asymptotic running time in Big-Theta notation \(\Theta(f(n))\) for each of the following C++ functions? Use the simplest possible expression for \(f\).

Solutions are at the end of this section.

Examples 1 and 2: Running Times of C++ Functions

1.

2.

Examples 3 and 4: Running Times of C++ Functions

3.

4.

Example 5: Running Times of C++ Functions

5.

Solutions: Running Times of C++ Functions

1. \(\Theta(n)\)

2. \(\Theta(n^3)\)

3. \(\Theta(\log n)\)

4. \(\Theta(n)\)

5. \(\Theta(n)\)

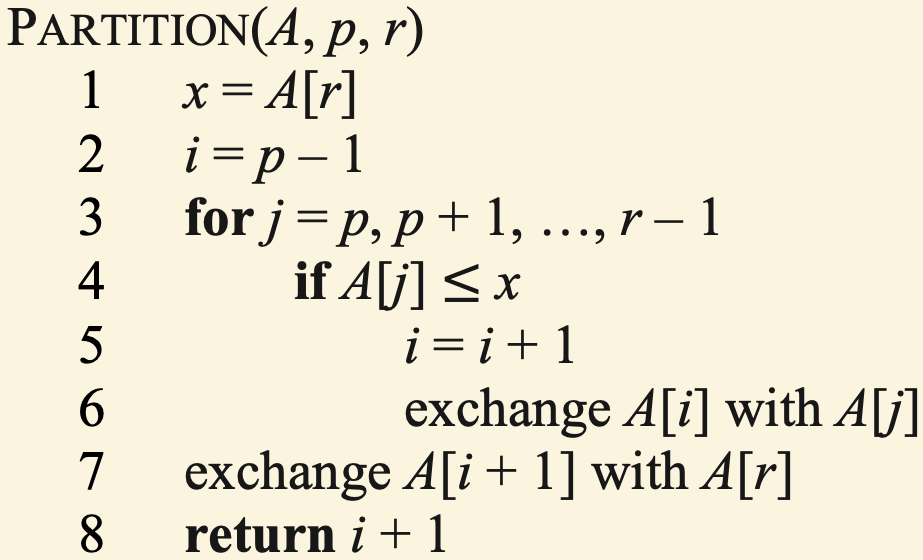

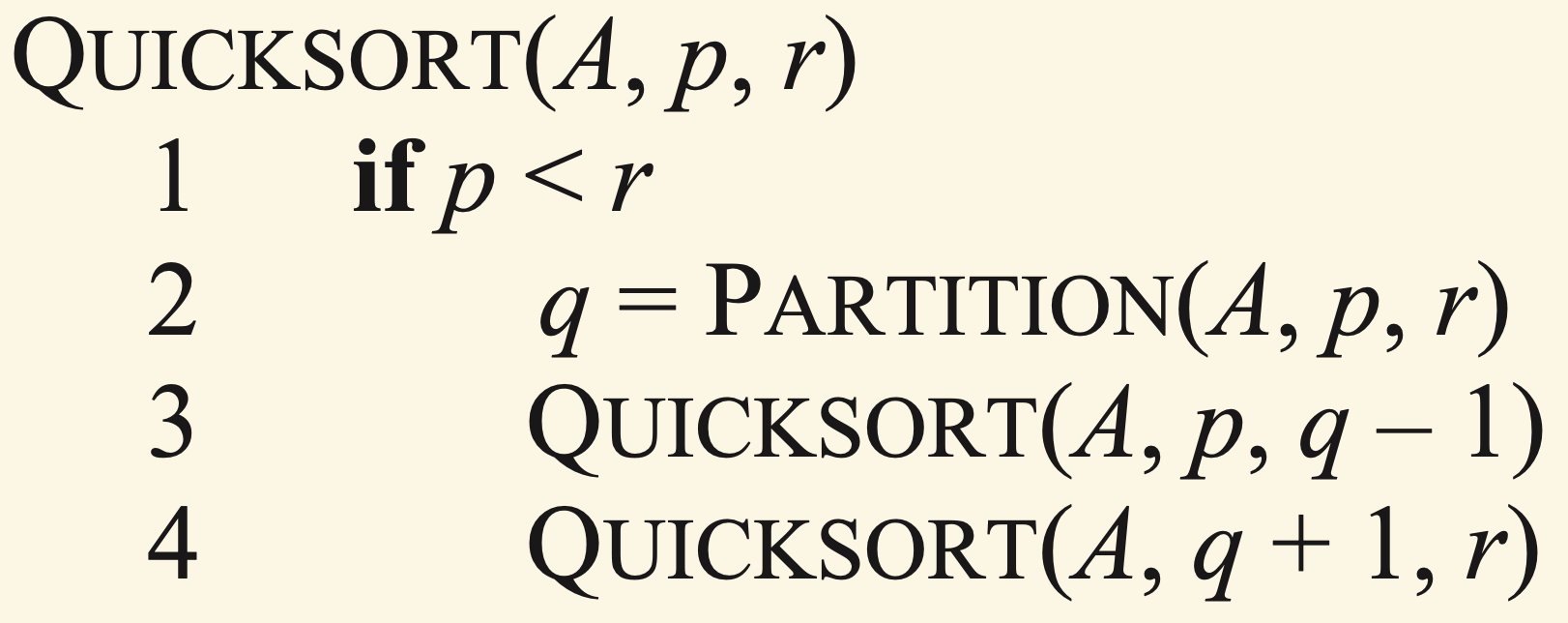

Quicksort

Quicksort Pseudocode

Exercise: Quicksort’s Partitioning Procedure

The figure below illustrates the steps involved in the Partition procedure, which is part of the Quicksort algorithm, applied to the input array \(\langle 5, 0, 7, 4, 8, 9, 2, 6\rangle\). You can find the pseudocode of the Partition procedure on the previous slide:

Illustrate the steps of the Partition procedure applied to \(\langle 6, 1, 2, 7, 4, 3, 5\rangle\) in a similar manner. You do not need to use colors, but indicate the locations of \(i\), \(j\), \(p\), and \(r\).

Solution: Quicksort’s Partitioning Procedure
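The hand-drawn steps can be checked against a short implementation. The sketch below assumes that the Partition pseudocode on the slide is the Lomuto-style scheme that uses the last element \(A[r]\) as the pivot, with \(i\) marking the end of the "less than or equal" region and \(j\) scanning the array. It uses 0-based indices, and the name `lomuto_partition` is illustrative.

```cpp
#include <utility>
#include <vector>

// Lomuto-style partition of A[p..r] (inclusive, 0-based) around the
// pivot A[r]. Returns the final index of the pivot.
int lomuto_partition(std::vector<int>& A, int p, int r) {
    const int pivot = A[r];
    int i = p - 1;  // A[p..i] holds the elements <= pivot seen so far
    for (int j = p; j < r; ++j) {
        if (A[j] <= pivot) {
            ++i;
            std::swap(A[i], A[j]);
        }
    }
    std::swap(A[i + 1], A[r]);  // place the pivot between the two regions
    return i + 1;
}
```

Under this assumption, partitioning \(\langle 6, 1, 2, 7, 4, 3, 5\rangle\) with \(p = 0\) and \(r = 6\) yields \(\langle 1, 2, 4, 3, 5, 7, 6\rangle\) and returns index 4, the final position of the pivot 5.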

Max-Heaps

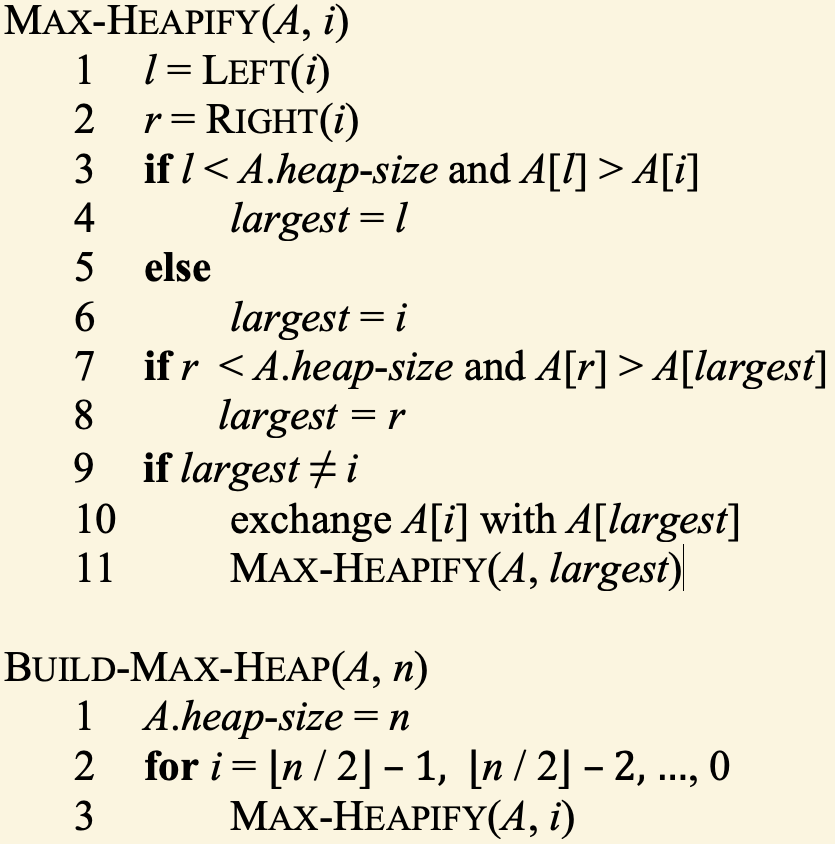

Pseudocode for Max-Heaps

Building a Max-Heap

Apply Build-Max-Heap to the array \(A = \langle 2, 1, 5, 6, 7, 9, 4\rangle\). You can find the pseudocode for Build-Max-Heap on the previous slide. Show your working and the end result as a tree.

Solution: Building a Max-Heap
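The result can be verified with a short implementation. The sketch below assumes the standard bottom-up Build-Max-Heap, which calls Max-Heapify on every internal node from the last one up to the root, and it uses 0-based indices. The function names mirror the pseudocode but are otherwise illustrative.

```cpp
#include <cstddef>
#include <utility>
#include <vector>

// Restores the max-heap property at index i, assuming both subtrees of i
// already are max-heaps. Only the first n elements of A belong to the heap.
void max_heapify(std::vector<int>& A, std::size_t i, std::size_t n) {
    while (true) {
        std::size_t largest = i;
        const std::size_t l = 2 * i + 1;
        const std::size_t r = 2 * i + 2;
        if (l < n && A[l] > A[largest]) largest = l;
        if (r < n && A[r] > A[largest]) largest = r;
        if (largest == i) return;
        std::swap(A[i], A[largest]);
        i = largest;  // continue sifting down in the affected subtree
    }
}

// Converts A into a max-heap by heapifying the internal nodes bottom-up.
void build_max_heap(std::vector<int>& A) {
    const std::size_t n = A.size();
    for (std::size_t i = n / 2; i-- > 0;) {
        max_heapify(A, i, n);
    }
}
```

Applied to \(A = \langle 2, 1, 5, 6, 7, 9, 4\rangle\), this sketch produces \(\langle 9, 7, 5, 6, 1, 2, 4\rangle\): the root 9 has children 7 and 5, node 7 has children 6 and 1, and node 5 has children 2 and 4.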

Hash Tables

Exercise: Open-Address Hash Tables

Consider inserting the keys 94, 34, 54, 46, 41, 45, 13, 7 and 79 into a hash table of length 11 using open addressing. Illustrate the result of inserting these keys using:

a. linear probing with the hash function \(h(k, i) = (k + i) \text{ mod } 11.\)

b. double hashing with the hash function \(h(k, i) = (h_1(k) + i \cdot h_2(k)) \text{ mod } 11,\) where \(h_1(k) = k\) and \(h_2(k) = 1 + (k \text{ mod } 10).\)

Solution: Open-Address Hash Tables

a. Linear probing:

b. Double hashing:
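Both tables can be reproduced with a small simulation. In the sketch below, the helper `insert_all`, the sentinel `kEmpty`, and the function names are illustrative assumptions; the two probe functions implement the hash functions given in the exercise.

```cpp
#include <functional>
#include <vector>

constexpr int kEmpty = -1;  // sentinel; assumes all keys are non-negative

// Inserts each key into a table of m slots, trying h(k, 0), h(k, 1), ...
// until an empty slot is found. `probe` maps (key, i) to a slot index.
// A key is silently dropped if none of its m probes finds an empty slot.
std::vector<int> insert_all(const std::vector<int>& keys, int m,
                            const std::function<int(int, int)>& probe) {
    std::vector<int> table(m, kEmpty);
    for (int k : keys) {
        for (int i = 0; i < m; ++i) {
            const int slot = probe(k, i);
            if (table[slot] == kEmpty) {
                table[slot] = k;
                break;
            }
        }
    }
    return table;
}

// Part a: h(k, i) = (k + i) mod 11.
int linear_probe(int k, int i) { return (k + i) % 11; }

// Part b: h(k, i) = (h1(k) + i * h2(k)) mod 11 with
// h1(k) = k and h2(k) = 1 + (k mod 10).
int double_hash(int k, int i) {
    const int h2 = 1 + (k % 10);
    return (k + i * h2) % 11;
}
```

With the keys inserted in the given order, linear probing fills slots 0 to 10 with (empty, 34, 46, 45, 13, 79, 94, 7, 41, empty, 54), and double hashing fills them with (79, 34, 46, 13, 7, empty, 94, 45, 41, empty, 54).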

Red-Black Trees

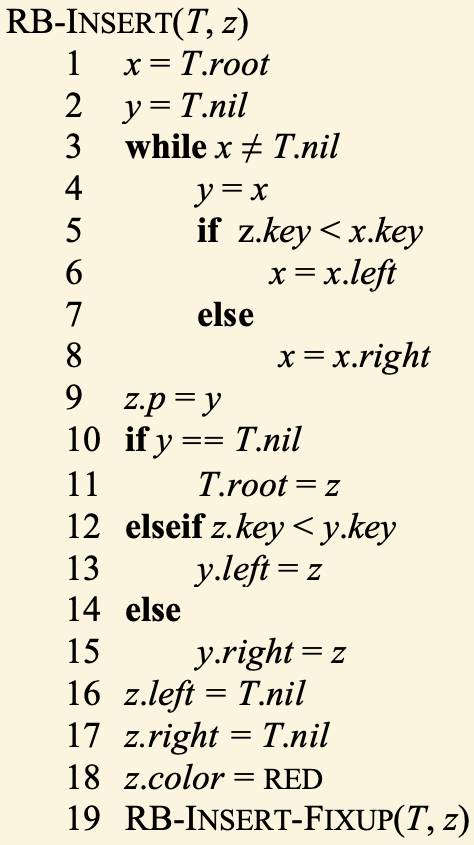

Insertion into Red-Black Trees: Pseudocode

Exercise: Insertion into a Red-Black Tree

Consider the red-black tree below:

Using the red-black tree insertion algorithm on the previous page, show the tree after inserting a node with key 63. Show your working and final answer.

Solution: Insertion into a Red-Black Tree

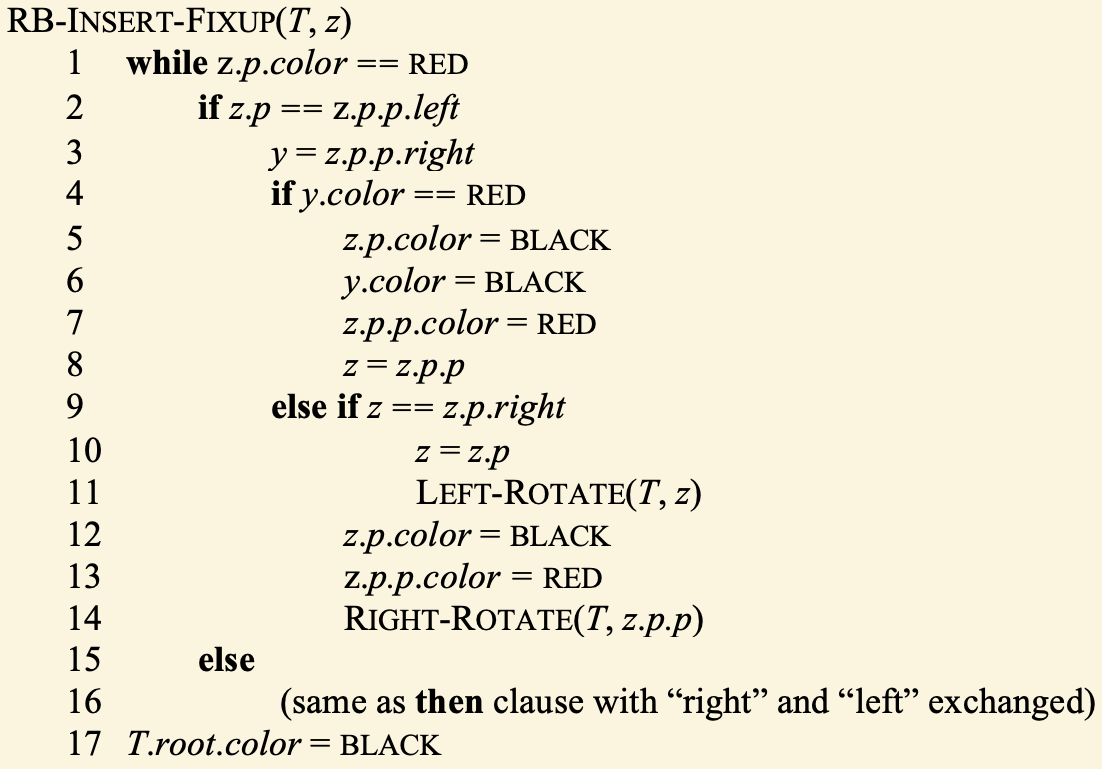

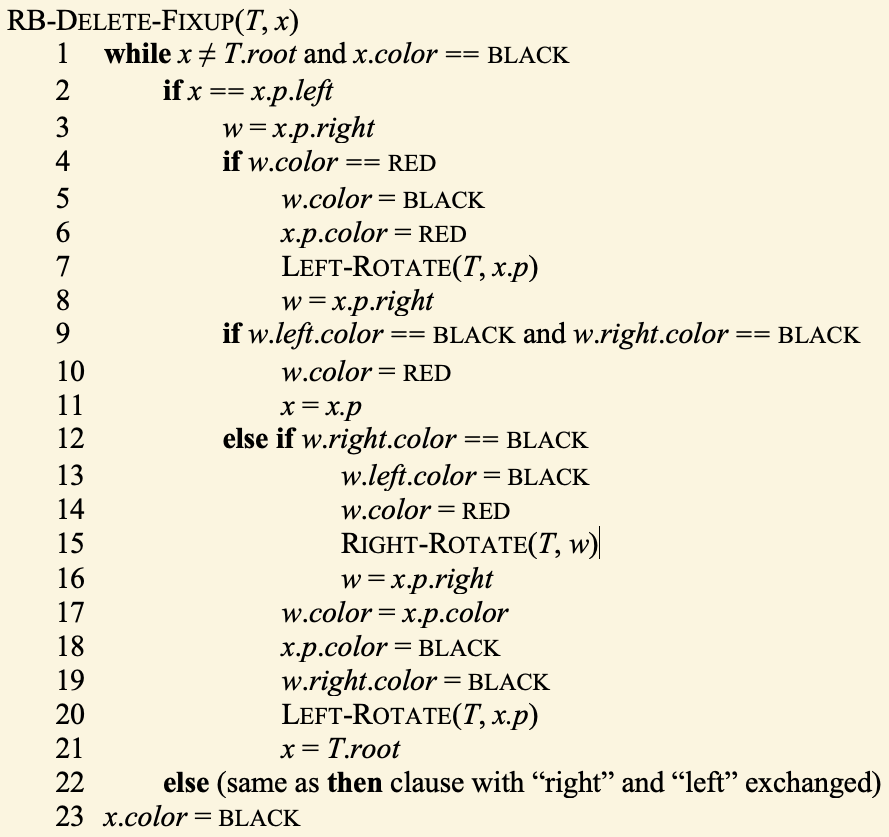

Deletion from Red-Black Trees: Pseudocode

Exercise: Deletion from a Red-Black Tree

Consider the red-black tree below:

Using the red-black tree deletion algorithm on the previous page, show the tree after deleting the node with key 60. Show your working and final answer.

Solution: Deletion from a Red-Black Tree
